For more on this recursive scheme for computing square roots of triangular matrices see Blocked Schur Algorithms for Computing the Matrix Square Root (2013) by Deadman, Higham and Ralha. If you’d like to take a look at the code, type edit private/sqrtm_tri at the MATLAB prompt. The Sylvester equation is solved using an LAPACK routine, for efficiency. In this way the task of computing the square root of is reduced to the tasks of recursively computing the square roots of the smaller matrices and and then solving the Sylvester equation for. How does sqrtm_tri work? It uses a recursive partitioning technique.

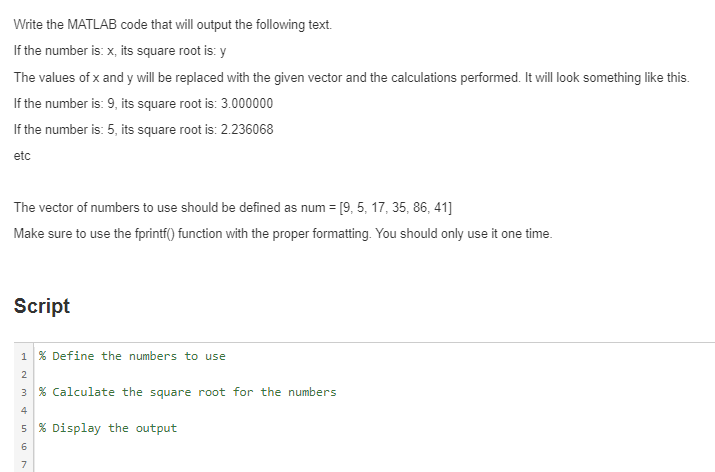

#MATLAB SQUARE ROOT CODE#

The slowdown for is for a combination of two reasons: the new code is more general, as it supports the real Schur form, and it contains function calls that generate overheads for small.

But for we already have a factor 8 speedup, rising to a factor 69 for. Shown are execution times in seconds for random triangular matrices an a quad-core Intel Core i7 processor. Here is a comparison of the underlying function sqrtm_tri (contained in toolbox/matlab/matfun/private/sqrtm_tri.m) with the relevant piece of code extracted from the old sqrtm. The new sqrtm introduced in MATLAB 2015b contains a new implementation of the Björck–Hammarling recurrence that, while still in M-code, is much faster. As a result, my sqrtm implemented the Björck–Hammarling recurrence in M-code as a triply nested loop and was rather slow. But while the inverse of a triangular matrix is a level 3 BLAS operation, and so has been very efficiently implemented in libraries, the square root computation is not in the level 3 BLAS standard. The importance of sqrtm has grown over the years because logm (for the matrix logarithm) depends on it, as do codes for other matrix functions, for computing arbitrary powers of a matrix and inverse trigonometric and inverse hyperbolic functions.įor a triangular matrix, the cost of the recurrence for computing is the same as the cost of computing, namely flops. My function also provided an estimate of the condition number of the matrix square root. The latter recurrence is perfectly stable. I pointed out the instability in a 1999 report A New Sqrtm for MATLAB and provided a replacement for sqrtm that employs a recurrence derived specifically for the square root by Björck and Hammarling in 1983. This approach can be unstable when has nearly repeated eigenvalues. The original sqrtm transformed to Schur form and then applied a recurrence of Parlett, designed for general matrix functions in fact it simply called the MATLAB funm function of that time. We make it unique in all cases by taking the square root of a negative number to be the one with nonnegative imaginary part. This square root is unique if has no real negative eigenvalues. 
In practice, it is usually the principal square root that is wanted, which is the one whose eigenvalues lie in the right-half plane. These two extremes occur when has a single block in its Jordan form (two square roots) and when an eigenvalue appears in two or more blocks in the Jordan form (infinitely many square roots). In fact, it has at least two square roots,, and possibly infinitely many. Recall that every -by- nonsingular matrix has a square root: a matrix such that. In this post I will explain how the recent changes have brought significant speed improvements. It was improved in MATLAB 5.3 (1999) and again in MATLAB 2015b. The MATLAB function sqrtm, for computing a square root of a matrix, first appeared in the 1980s.
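The recursion and its scalar base case can be illustrated with a short Python/NumPy sketch. It is not MathWorks' sqrtm_tri. The function names sqrtm_tri_bh, sylvester_tri and sqrtm_tri_recursive and the base_size cutoff are hypothetical. The sketch handles only upper triangular (complex Schur) input, not the real Schur form, and assumes $T$ is nonsingular, so that the denominators $u_{ii} + u_{jj}$ in the Björck–Hammarling recurrence never vanish. It solves the Sylvester equation by simple column-by-column substitution rather than by an LAPACK routine.

```python
import numpy as np


def sqrtm_tri_bh(T):
    """Principal square root of an upper triangular T via the
    Björck–Hammarling recurrence, computed one column at a time."""
    T = np.asarray(T, dtype=complex)
    n = T.shape[0]
    U = np.zeros_like(T)
    # Diagonal: principal square roots of the eigenvalues
    # (a negative real number gets the root with positive imaginary part).
    for i in range(n):
        U[i, i] = np.sqrt(T[i, i])
    # Off-diagonal: equate the (i, j) entries of U @ U and T,
    # working up each column so U[i+1:j, j] is already known.
    for j in range(1, n):
        for i in range(j - 1, -1, -1):
            s = U[i, i + 1:j] @ U[i + 1:j, j]
            U[i, j] = (T[i, j] - s) / (U[i, i] + U[j, j])
    return U


def sylvester_tri(R11, R22, C):
    """Solve R11 @ X + X @ R22 = C, with R11 and R22 upper triangular,
    by substituting for one column of X at a time (hypothetical helper)."""
    m, p = C.shape
    X = np.zeros((m, p), dtype=complex)
    I = np.eye(m)
    for j in range(p):
        # Column j of X @ R22 involves only columns 0..j of X.
        rhs = C[:, j] - X[:, :j] @ R22[:j, j]
        X[:, j] = np.linalg.solve(R11 + R22[j, j] * I, rhs)
    return X


def sqrtm_tri_recursive(T, base_size=8):
    """Principal square root of an upper triangular T by recursive
    2-by-2 block partitioning; blocks of order <= base_size use the
    scalar recurrence (hypothetical helper, not MATLAB's code)."""
    T = np.asarray(T, dtype=complex)
    n = T.shape[0]
    if n <= max(base_size, 1):  # a cutoff below 1 would never terminate
        return sqrtm_tri_bh(T)
    m = n // 2
    R11 = sqrtm_tri_recursive(T[:m, :m], base_size)  # T11^(1/2)
    R22 = sqrtm_tri_recursive(T[m:, m:], base_size)  # T22^(1/2)
    X12 = sylvester_tri(R11, R22, T[:m, m:])         # R11 X12 + X12 R22 = T12
    U = np.zeros_like(T)
    U[:m, :m] = R11
    U[m:, m:] = R22
    U[:m, m:] = X12
    return U


if __name__ == "__main__":
    T = np.array([[-4.0, 1.0], [0.0, 9.0]])
    U = sqrtm_tri_recursive(T, base_size=1)
    print(np.diag(U))             # diagonal entries 2j and 3
    print(np.allclose(U @ U, T))  # True
```

With base_size=1 the recursion goes all the way down to 1-by-1 blocks; a larger cutoff hands small diagonal blocks to the scalar recurrence, which is one way to limit recursion overhead. Because both routines use explicit Python loops, the sketch shows the structure of the algorithm, not its speed.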